How COVID Changed Content Moderation: Year in Review 2020

In a year that saw every facet of online life reshaped by the coronavirus pandemic, online content moderation and platform censorship were no exception.

Twitter, Trump, and Tough Decisions: EU Freedom of Expression and the Digital Services Act

This blog post was co-written by Dr. Aleksandra Kuczerawy (Senior Fellow and Researcher at KU Leuven) and inspired by her publication at Verfassungsblog.

Rewriting Intermediary Liability Laws: What EFF Asks – and You Should Too

Rewriting the legal pillars of the Internet is a popular sport these days. Frustration at Big Tech, among other things, has led to a flurry of proposals to change long-standing laws, like Section 230, Section 512 of the DMCA, and the E-Commerce Directive, that help shield online intermediaries from potential liability for what their users say or do, or for their content moderation decisions.

EFF to Supreme Court: States Face High Burden to Justify Forcing Groups to Turn Over Donor Names

Throughout our nation’s history—most potently since the era of civil rights activism—those participating in social movements challenging the status quo have enjoyed First Amendment protections to freely associate with others in advocating for causes they believe in. This right is directly tied to our ability to maintain privacy over what organizations we choose to join or support financially. Forcing organizations to hand membership or donor lists to the state threatens First Amendment activities and suppresses dissent, as those named, facing...

The SAFE Tech Act Wouldn't Make the Internet Safer for Users

Section 230, a key law protecting free speech online since its passage in 1996, has been the subject of numerous legislative assaults over the past few years. The attacks have come from all sides. One of the latest, the SAFE Tech Act, seeks to address real problems Internet users experience, but its implementation would harm everyone on the Internet.

UN Human Rights Committee Criticizes Germany’s NetzDG for Letting Social Media Platforms Police Online Speech

A UN human rights committee examining the status of civil and political rights in Germany took aim at the country’s Network Enforcement Act, or NetzDG, criticizing the hate speech law in a recent report for enlisting social media companies to carry out government censorship, with no judicial oversight of content removal. The United Nations Human Rights Committee, which oversees the implementation of the United Nations International Covenant on Civil and Political Rights (ICCPR), expressed concerns, as we and others have, that...

EFF Tells Court to Protect Anonymous Speakers, Apply Proper Test Before Unmasking Them In Trademark Commentary Case

Judges cannot minimize the First Amendment rights of anonymous speakers who use an organization’s logo, especially when that use may be intended to send a message to the trademark owner, EFF told a federal appeals court this week.

EFF to Federal Court: Block Unconstitutional Texas Social Media Law

Users are understandably frustrated and perplexed by many big tech companies’ content moderation practices. Facebook, Twitter, and other social media platforms make many questionable, confounding, and often downright incorrect decisions affecting speakers of all political stripes.

New Texas Abortion Law Likely to Unleash a Torrent of Lawsuits Against Online Education, Advocacy and Other Speech

In addition to the drastic restrictions it places on a woman’s reproductive and medical care rights, the new Texas abortion law, SB8, will have devastating effects on online speech.

OnlyFans Content Creators Are the Latest Victims of Financial Censorship

Update (8/26/21): Victory! OnlyFans has reversed course and suspended its plans to ban sexually explicit content, saying it has “secured assurances necessary” from banking partners and payout providers to enable it to continue to serve all creators. We hope that financial institutions will take note that it is unacceptable to censor constitutionally protected legal speech by threatening to shut down access to financial services. EFF continues to actively fight financial censorship.

O (No!) Canada: Fast-Moving Proposal Creates Filtering, Blocking and Reporting Rules—and Speech Police to Enforce Them

Policymakers around the world are contemplating a wide variety of proposals to address “harmful” online expression. Many of these proposals are dangerously misguided and will inevitably result in the censorship of all kinds of lawful and valuable expression. And one of the most dangerous proposals may be adopted in Canada. How bad is it? As Stanford’s Daphne Keller observes, “It's like a list of the worst ideas around the world.” She’s right.

With Great Power Comes Great Responsibility: Platforms Want To Be Utilities, Self-Govern Like Empires

After decades of hype, it’s only natural for your eyes to skate over corporate mission-statements without stopping to take note of them, but when it comes to ending your relationship with them, tech giants’ stated goals take on a sinister cast.

EFF to First Circuit: Schools Should Not Be Policing Students’ Weekend Snapchat Posts

This blog post was co-written by EFF intern Haley Amster.

Turkey’s Free Speech Clampdown Hits Twitter, Clubhouse -- But Most of All, The Turkish People

EFF has been tracking the Turkish government’s crackdown on tech platforms and its continuing efforts to force them to comply with draconian rules on content control and access to users’ data. As of now, the Turkish government has managed to coerce Facebook, YouTube, and TikTok into appointing a legal representative to comply with the legislation via threats to their bottom line: prohibiting Turkish taxpayers from placing ads and making payments to them if they fail to appoint a legal...

Indonesia’s Proposed Online Intermediary Regulation May be the Most Repressive Yet

Indonesia is the latest government to propose a legal framework to coerce social media platforms, apps, and other online service providers to accept local jurisdiction over their content and users’ data policies and practices. And in many ways, its proposal is the most invasive of human rights.

Facebook's Latest Proposed Policy Change Exemplifies the Trouble With Moderating Speech at Scale

Hateful speech presents one of the most difficult problems of content moderation. At a global scale, it’s practically impossible.

Can Government Officials Block You on Social Media? A New Decision Makes the Law Murkier, But Users Still Have Substantial Rights

It’s now common practice for politicians and other government officials to make major policy announcements on Twitter and other social media forums. That’s continuing to raise important questions about who can read those announcements, and what happens when people are blocked from accessing and commenting on important social media feeds. A new decision out of a federal appeals court affirms much of the public’s right to read and reply to these government communications, but muddies one particular, commonly occurring issue.

Fact-Checking, COVID-19 Misinformation, and the British Medical Journal

Throughout the COVID-19 pandemic, authoritative research and publications have been critical in gaining better knowledge of the virus and how to combat it. However, unlike previous pandemics, this one has been further exacerbated by a massive wave of misinformation and disinformation spreading across traditional and online social media.

Disentangling Disinformation: Not as Easy as it Looks

Body bags claiming that “disinformation kills” line the streets today in front of Facebook’s Washington, D.C. headquarters. A group of protesters, affiliated with “The Real Facebook Oversight Board” (an organization that is, confusingly, not affiliated with Facebook or its Oversight Board), is urging Facebook’s shareholders to ban so-called misinformation “superspreaders”—that is, a specific number of accounts that have been deemed responsible for the majority of disinformation about the COVID-19 vaccines.

Right or Left, You Should Be Worried About Big Tech Censorship

Claiming that “right-wing voices are being censored,” Republican-led legislatures in Florida and Texas have introduced legislation to “end Big Tech censorship.” They say that the dominant tech platforms block legitimate speech without ever articulating their moderation policies, that they are slow to admit their mistakes, and that there is no meaningful due process for people who think the platforms got it wrong.

Article 17 Copyright Directive: The Court of Justice’s Advocate General Rejects Fundamental Rights Challenge But Defends Users Against Overblocking

The Advocate General (AG) of the EU Court of Justice today missed an opportunity to fully protect internet users from censorship by automated filtering, finding that the disastrous Article 17 of the EU Copyright Directive doesn’t run afoul of Europeans’ free expression rights. The good news is that the AG’s opinion, a non-binding recommendation for the EU Court of Justice, defends users against overblocking, warning social media platforms and other content hosts that they are not permitted to automatically block...

Changing Section 230 Won’t Make the Internet a Kinder, Gentler Place

Tech platforms, especially the largest ones, have a problem—there’s a lot of offensive junk online. Many lawmakers on Capitol Hill keep coming back to the same solution—blaming Section 230.

Tracking Global Online Censorship: What EFF Is Doing Next

As the world stays home to slow the spread of COVID-19, communities are rapidly transitioning to digital meeting spaces. This highlights a trend EFF has tracked for years: discussions in virtual spaces shape and reflect societal freedoms, and censorship online replicates repression offline. As most of us spend increasing amounts of time in digital spaces, the impact of censorship on individuals around the world is acute.

Facebook's Policy Shift on Politicians Is a Welcome Step

We are happy to see the news that Facebook is putting an end to a policy that has long privileged the speech of politicians over that of ordinary users. The policy change, which was announced on Friday by The Verge, is something that EFF has been pushing for since as early as 2019.

Newly Released Records Show How Trump Tried to Retaliate Against Social Media For Fact-Checking

A year ago today, President Trump issued an Executive Order that deputized federal agencies to retaliate against online social media services on his behalf, a disturbing and unconstitutional attack on internet free expression.

Amid Systemic Censorship of Palestinian Voices, Facebook Owes Users Transparency

Over the past few weeks, as protests in—and in solidarity with—Palestine have grown, so too have violations of the freedom of expression of Palestinians and their allies by major social media companies. From posts incorrectly flagged by Facebook as incitement to violence, to financial censorship of relief payments made on Venmo, and the removal of Instagram Stories (which also heavily affected activists in Colombia, Canada, and Brazil), Palestinians are experiencing an unprecedented level of censorship during a time where digital...

Lawsuit Against Snapchat Rightfully Goes Forward Based on “Speed Filter,” Not User Speech

The U.S. Court of Appeals for the Ninth Circuit has allowed a civil lawsuit to move forward against Snapchat, a smartphone social media app, brought by the parents of three teenage boys who died tragically in a car accident after reaching a maximum speed of 123 miles per hour. We agree with the court’s ruling, which confirmed that internet intermediaries are not immune from liability when the harm does not flow from the speech of other users.

President Biden Revokes Unconstitutional Executive Order Retaliating Against Online Platforms

President Joe Biden on Friday rescinded a dangerous and unconstitutional Executive Order issued by President Trump that threatened internet users’ ability to obtain truthful information online and retaliated against services that fact-checked the former president. The Executive Order called on multiple federal agencies to punish private online social media services for content moderation decisions that President Trump did not like.

The Florida Deplatforming Law is Unconstitutional. Always has Been.

Last week, the Florida Legislature passed a bill prohibiting social media platforms from “knowingly deplatforming” a candidate (the Transparency in Technology Act, SB 7072), on pain of a fine of up to $250k per day, unless, I kid you not, the platform owns a sufficiently large theme park.

Facebook Oversight Board Affirms Trump Suspension -- For Now

Today’s decision from the Facebook Oversight Board regarding the suspension of President Trump’s account — to extend the suspension for six months and require Facebook to reevaluate in light of the platform’s stated policies — may be frustrating to those who had hoped for a definitive ruling. But it is also a careful and needed indictment of Facebook’s opaque and inconsistent moderation approach that offers several recommendations to help Facebook do better, focused especially on consistency and transparency. Consistency and transparency should...

EFF at 30: Protecting Free Speech, with Senator Ron Wyden

To commemorate the Electronic Frontier Foundation’s 30th anniversary, we present EFF30 Fireside Chats. This limited series of livestreamed conversations looks back at some of the biggest issues in internet history and their effects on the modern web.

Canada’s Attempt to Regulate Sexual Content Online Ignores Technical and Historical Realities

Canadian Senate Bill S-203, AKA the “Protecting Young Persons from Exposure to Pornography Act,” is another woefully misguided proposal aimed at regulating sexual content online. To say the least, this bill fails to understand how the internet functions and would be seriously damaging to online expression and privacy. It’s bad in a variety of ways, but there are three specific problems that need to be laid out: 1) technical impracticality, 2) competition harms, and 3) privacy and security.

Organizations Call on President Biden to Rescind President Trump’s Executive Order that Punished Online Social Media for Fact-Checking

President Joe Biden should rescind a dangerous and unconstitutional Executive Order issued by President Trump that continues to threaten internet users’ ability to obtain accurate and truthful information online, six organizations wrote in a letter sent to the president on Wednesday.

India’s Strict Rules For Online Intermediaries Undermine Freedom of Expression

India has introduced draconian changes to its rules for online intermediaries, tightening government control over the information ecosystem and what can be said online. It has created rules that seek to restrict social media companies and other content hosts from coming up with their own moderation policies, including those framed to comply with international human rights obligations. The new “Intermediary Guidelines and Digital Media Ethics Code” (2021 Rules) have already been used in an attempt to censor speech about the...

The EU Online Terrorism Regulation: a Bad Deal

On 12 September 2018, the European Commission presented a proposal for a regulation on preventing the dissemination of terrorist content online—dubbed the Terrorism Regulation, or TERREG for short—that contained some alarming ideas. In particular, the proposal included an obligation for platforms to remove potentially terrorist content within one hour, following an order from national competent authorities.

Content Moderation Is A Losing Battle. Infrastructure Companies Should Refuse to Join the Fight

It seems like every week there’s another Big Tech hearing accompanied by a flurry of mostly bad ideas for reform. Two events set last week’s hubbub apart, both involving Facebook. First, Mark Zuckerberg took a new step in his blatant effort to use 230 reform to entrench Facebook’s dominance. Second, new reports are demonstrating, if further demonstration were needed, how badly Facebook is failing at policing the content on its platform with any consistency whatsoever. The overall message is clear:...

Even with Changes, the Revised PACT Act Will Lead to More Online Censorship

Among the dozens of bills introduced last Congress to amend a key internet law that protects online services and internet users, the Platform Accountability and Consumer Transparency Act (PACT Act) was perhaps the only serious attempt to tackle the problem of a handful of dominant online services hosting people’s expression online.

Facebook’s Pitch to Congress: Section 230 for Me, But not for Thee

As Mark Zuckerberg tries to sell Congress on Facebook’s preferred method of amending the federal law that serves as a key pillar of the internet, lawmakers must see it for what it really is: a self-serving and cynical effort to cement the company’s dominance.

Political Satire Is Protected Speech – Even If You Don’t Get the Joke

This blog post was co-written by EFF Legal Fellow Houston Davidson.

Beyond Platforms: Private Censorship, Parler, and the Stack

Last week, following riots that saw supporters of President Trump breach and sack parts of the Capitol building, Facebook and Twitter made the decision to give the president the boot. That was notable enough, given that both companies had previously treated the president, like other political leaders, as largely exempt from content moderation rules. Many of the president’s followers responded by moving to an alternative platform, Parler. This week, the response has taken a new turn by targeting. Infrastructure companies...

EFF's Response to Social Media Companies' Decisions to Block President Trump’s Accounts

Like most people in the United States and around the world, EFF is shocked and disgusted by Wednesday’s violent attack on the U.S. Capitol. We support all those who are working to defend the Constitution and the rule of law, and we are grateful for the service of policymakers, staffers, and other workers who endured many hours of lockdown and reconvened to fulfill their constitutional duties. The decisions by Twitter, Facebook, Instagram, Snapchat, and others to suspend and/or block President...

What Comes Next for the Santa Clara Principles: 2020 in Review

For many years, we have urged platforms to operate with more transparency—both to the public and to their users—and to ensure that the people who use their services have the ability to appeal wrongful content moderation decisions. As such, in conjunction with several other organizations and academic experts, we launched the Santa Clara Principles on Transparency and Accountability in Content Moderation in February 2018 on the sidelines of an event on content moderation at Santa Clara University to make our...

How COVID Changed Content Moderation: Year in Review 2020

In a year that saw every facet of online life reshaped by the coronavirus pandemic, online content moderation and platform censorship were no exception.

European Commission’s Proposed Digital Services Act Got Several Things Right, But Improvements Are Necessary to Put Users in Control

The European Commission is set to release today a draft of the Digital Services Act, the most significant reform of European Internet regulations in two decades. The proposal, which will modernize the backbone of the EU’s Internet legislation—the e-Commerce Directive—sets out new responsibilities and rules for how Facebook, Amazon, and other companies that host content handle and make decisions about billions of users’ posts, comments, messages, photos, and videos. This is a great opportunity for the EU to reinvigorate principles...

It’s Not Section 230 President Trump Hates, It’s the First Amendment

President Trump’s recent threat to “unequivocally VETO” the National Defense Authorization Act (NDAA) if it doesn’t include a repeal of Section 230 may represent the final attack on online free speech of his presidency, but it’s certainly not the first. The NDAA is one of the “must-pass” bills that Congress passes every year, and it’s absurd that Trump is using it as his at-the-buzzer shot to try to kill the most important law protecting free speech online. Congress must reject...

Publisher or Platform? It Doesn't Matter.

“You have to choose: are you a platform or a publisher?”

Section 230 is Good, Actually

Even though it’s only 26 words long, Section 230 doesn’t say what many think it does.

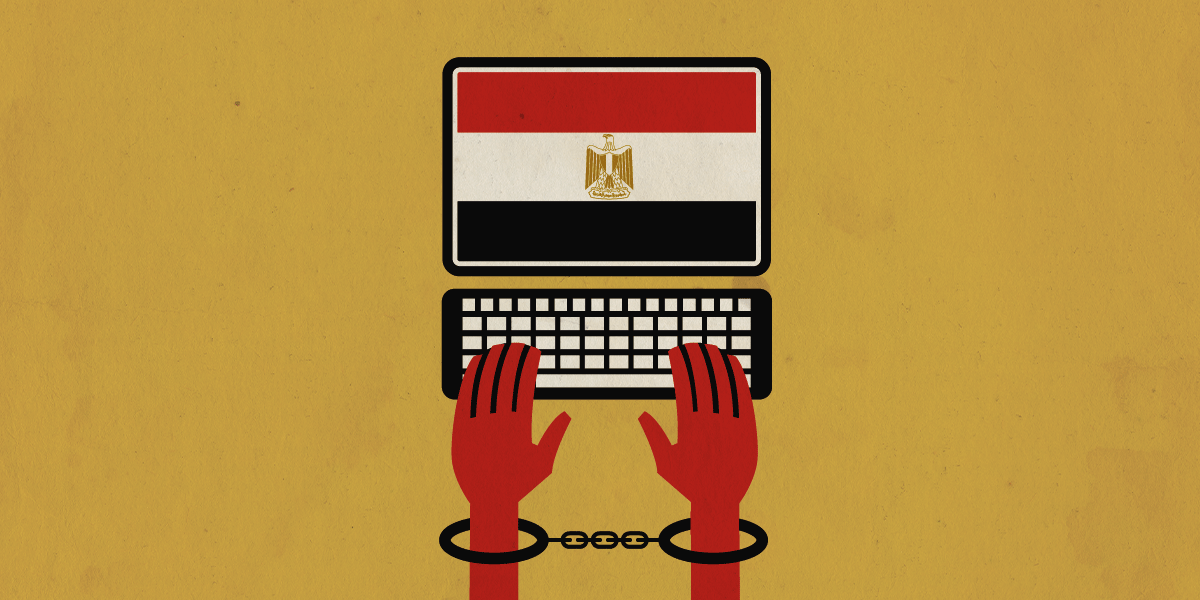

EFF Condemns Egypt's Latest Crackdown

We are quickly approaching the tenth anniversary of the Egyptian revolution, a powerfully hopeful time in history when—despite all odds—Egyptians rose up against an entrenched dictatorship and shook it from power, with the assistance of new technologies. Though the role of social media has been hotly debated and often overplayed, technology most certainly played a role in organizing, and Egyptian activists demonstrated the potential of social media for organizing and disseminating key information globally.

Victory! Court Protects Anonymity of Security Researchers Who Reported Apparent Communications Between Russian Bank and Trump Organization

Update: On May 19, 2021, an Indiana appellate court dismissed Alfa Bank's appeal of the trial court order denying its effort to unmask the anonymous researchers on grounds that it lacked jurisdiction to hear the appeal. EFF filed an amicus brief with the appellate court in support of the researchers.

Don’t Blame Section 230 for Big Tech’s Failures. Blame Big Tech.

Next time you hear someone blame Section 230 for a problem with social media platforms, ask yourself two questions: first, was this problem actually caused by Section 230? Second, would weakening Section 230 solve the problem? Politicians and commentators on both sides of the aisle frequently blame Section 230 for big tech companies’ failures, but their reform proposals wouldn’t actually address the problems they attribute to Big Tech. If lawmakers are concerned about large social media platforms’ outsized influence on...

Now and Always, Platforms Should Learn From Their Global User Base

The upcoming U.S. elections have invited broad attention to many of the questions with which civil society has struggled for years: what should companies do about misinformation and hate speech? And what, specifically, should be done when that speech is coming from the world’s most powerful leaders?